Agent Control Plane 2026: The Race for the Harness

Between 8 and 29 April 2026 six of the most important AI and cloud vendors released their own agent harness as a product. An engineering discipline became a procurement category. Whoever decided in 2025 which model to use now also has to decide which control plane runs their agents, on which pricing model and with which sovereignty profile.

Within four weeks, Anthropic Managed Agents (8 April), the OpenAI Agents SDK update (15 April), Snowflake Cortex Code (21 April), Salesforce Agent Fabric (21 April), Google Gemini Enterprise at Cloud Next 2026 (22 April) and Guild.ai with a 44 million US dollar Series A (29 April) all launched. In parallel, Stanford's Meta-Harness paper reached 76.4 percent on Terminal-Bench 2.0, showing that harness configurations can be optimized automatically. For mid-market and enterprise IT this means four business models now run side by side: pay-per-session, open-source harness, platform bundle and vendor-neutral control plane. The platform choice locks a company in for two to three years and should be made before any new productive agents are deployed.

What changed in four weeks

The launches change platform roadmaps in IT departments across mid-market and enterprise alike.

Anthropic Managed Agents

Public beta of the fully hosted agent runtime, billed at 0.08 US dollars per active session-hour. Launch customers include Notion, Rakuten, Sentry and Asana.

OpenAI Agents SDK update

The Codex harness becomes open source and ships through the updated Agents SDK. Cloudflare Agent Cloud offers a ready-to-deploy hosting variant.

Snowflake Cortex Code and Salesforce Agent Fabric

Snowflake anchors the control plane in the data platform. Salesforce delivers Agent Fabric as a multi-vendor governance layer for CRM workloads.

Google Cloud Next 2026: Gemini Enterprise

Google positions the Gemini Enterprise Agent Platform as the central control plane and announces a 750 million US dollar partner ecosystem investment.

Guild.ai Series A

Backed with 44 million US dollars from Google Ventures, NFX, Acrew, Khosla, Scribble and Webb, Guild.ai launches as a vendor-neutral, model-agnostic alternative to the hyperscalers.

What used to be an engineering task in platform teams becomes a platform decision in IT strategy in Q2 2026. The choice locks in sessions, memory format, tool registry and audit logs. A later migration is significantly more expensive than swapping a model.

What an agent control plane delivers

An agent control plane handles the work that traditional cloud stacks split across Kubernetes, service mesh and IAM. It starts, monitors, throttles and ends agents, runs audit logs, manages permissions, distributes tools through the Model Context Protocol and ensures rollbacks. Unlike a pure model endpoint it is stateful and long-running.
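The lifecycle duties described above (start, monitor, throttle, end, audit) can be sketched in a few lines. This is a minimal illustration, not any vendor's API: the class names, the `max_steps` limit and the audit-record fields are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class State(Enum):
    RUNNING = "running"
    THROTTLED = "throttled"
    STOPPED = "stopped"


@dataclass
class Session:
    agent: str
    owner: str
    state: State = State.RUNNING
    steps: int = 0


@dataclass
class ControlPlane:
    max_steps: int = 100  # hypothetical per-session step budget
    sessions: dict[str, Session] = field(default_factory=dict)
    audit_log: list[dict] = field(default_factory=list)

    def _audit(self, session_id: str, event: str) -> None:
        # Every state change leaves a timestamped record.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "session": session_id,
            "event": event,
        })

    def start(self, session_id: str, agent: str, owner: str) -> Session:
        session = Session(agent=agent, owner=owner)
        self.sessions[session_id] = session
        self._audit(session_id, "started")
        return session

    def record_step(self, session_id: str) -> State:
        # Monitoring hook: count steps and throttle once the budget is used up.
        session = self.sessions[session_id]
        if session.state is State.STOPPED:
            raise RuntimeError("session already stopped")
        session.steps += 1
        if session.steps >= self.max_steps and session.state is State.RUNNING:
            session.state = State.THROTTLED
            self._audit(session_id, "throttled")
        return session.state

    def stop(self, session_id: str, reason: str) -> None:
        self.sessions[session_id].state = State.STOPPED
        self._audit(session_id, f"stopped: {reason}")
```

The point of the sketch is the statefulness: unlike a model endpoint, the control plane keeps a record per session and can act on it long after the first request.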

Governance

Who is allowed to deploy which agent against which system, who reviews actions, which limits apply per team and use case.

Security

Sandboxing, secret management, approval steps for critical actions and an emergency stop for runaway sessions.
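Approval steps and the emergency stop can be pictured as a gate that every action passes before it runs. The sketch below is an assumption-laden illustration: the list of critical actions, the class name `ActionGate` and both exception types are invented for the example.

```python
from dataclasses import dataclass, field

# Hypothetical set of actions that always need a human sign-off.
CRITICAL_ACTIONS = {"delete_records", "send_payment", "deploy_to_prod"}


class ApprovalRequired(Exception):
    """Raised when a critical action has no recorded approval."""


class SessionHalted(Exception):
    """Raised when the session was stopped by the emergency stop."""


@dataclass
class ActionGate:
    approved: set[tuple[str, str]] = field(default_factory=set)  # (session_id, action)
    halted: set[str] = field(default_factory=set)

    def approve(self, session_id: str, action: str) -> None:
        self.approved.add((session_id, action))

    def emergency_stop(self, session_id: str) -> None:
        self.halted.add(session_id)

    def check(self, session_id: str, action: str) -> None:
        # The emergency stop wins over everything, including prior approvals.
        if session_id in self.halted:
            raise SessionHalted(session_id)
        if action in CRITICAL_ACTIONS and (session_id, action) not in self.approved:
            raise ApprovalRequired(f"{action} needs human approval")
```

Calling `check` before each tool invocation keeps the policy in one place instead of scattering it across individual agents.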

Observability

Trace storage per session, deterministic replays, per-session cost accounting, anomaly detection.

Tool registry

Unified access to MCP tools and A2A protocols, versioning per tool, approval workflow for new tools.
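A registry with per-tool versioning and an approval workflow could look roughly like this. The data model is an assumption for illustration; it does not reproduce the Model Context Protocol or any vendor's registry, and the endpoint in the usage example is a placeholder.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ToolVersion:
    name: str
    version: str
    endpoint: str  # e.g. the address of an MCP server (placeholder value)


@dataclass
class ToolRegistry:
    _pending: dict[tuple[str, str], ToolVersion] = field(default_factory=dict)
    _approved: dict[tuple[str, str], tuple[ToolVersion, str]] = field(default_factory=dict)

    def submit(self, tool: ToolVersion) -> None:
        # New tools and new versions wait for review before agents can use them.
        self._pending[(tool.name, tool.version)] = tool

    def approve(self, name: str, version: str, reviewer: str) -> None:
        tool = self._pending.pop((name, version))
        self._approved[(name, version)] = (tool, reviewer)

    def resolve(self, name: str, version: str) -> ToolVersion:
        # Agents only ever see approved, pinned versions.
        try:
            return self._approved[(name, version)][0]
        except KeyError:
            raise LookupError(f"{name}@{version} is not approved") from None
```

Usage: `submit` a `ToolVersion`, have a reviewer call `approve`, and let agents call `resolve("crm_lookup", "1.2.0")`. Pinning the version in `resolve` is what makes a later rollback a one-line change.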

Memory

Memory hierarchy across conversation, project, organization and global knowledge base, with retention and deletion policies.
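Retention per memory level can be expressed as a small policy table. The four scope names follow the hierarchy above; the retention periods are invented placeholders, not recommendations or legal guidance.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical retention periods per scope; None means "keep until deleted explicitly".
RETENTION: dict[str, Optional[timedelta]] = {
    "conversation": timedelta(days=30),
    "project": timedelta(days=365),
    "organization": timedelta(days=3 * 365),
    "global": None,
}


@dataclass
class MemoryItem:
    scope: str
    text: str
    created: datetime


def is_expired(item: MemoryItem, now: datetime) -> bool:
    limit = RETENTION[item.scope]
    return limit is not None and now - item.created > limit


def purge(items: list[MemoryItem], now: datetime) -> list[MemoryItem]:
    # Returns the items that survive the retention policy.
    return [item for item in items if not is_expired(item, now)]
```

Running `purge` on a schedule turns the deletion policy from a document into an enforced rule.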

Billing

Per team, per agent, per use case. Token costs and runtime costs tracked separately, with quotas and alerts.
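Tracking token and runtime costs separately, with a quota check on the sum, fits in a small ledger. The sketch keys costs by team only to stay short; per-agent and per-use-case keys would work the same way. All names and quota values are assumptions for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class CostLedger:
    quotas_usd: dict[str, float]  # hypothetical quota per team
    token_usd: dict[str, float] = field(default_factory=lambda: defaultdict(float))
    runtime_usd: dict[str, float] = field(default_factory=lambda: defaultdict(float))

    def record(self, team: str, *, token_usd: float = 0.0, runtime_usd: float = 0.0) -> None:
        # The two cost types stay in separate buckets so they can be reported apart.
        self.token_usd[team] += token_usd
        self.runtime_usd[team] += runtime_usd

    def total(self, team: str) -> float:
        return self.token_usd[team] + self.runtime_usd[team]

    def over_quota(self) -> list[str]:
        # Teams whose combined spend exceeds their quota; this is the hook for alerts.
        return sorted(team for team, quota in self.quotas_usd.items() if self.total(team) > quota)
```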

The link to the established discipline of harness engineering is tight. The harness is the software layer around a single AI model. The control plane manages many such harnesses centrally. Put simply: the harness is the engine, the control plane is the workshop with maintenance records.

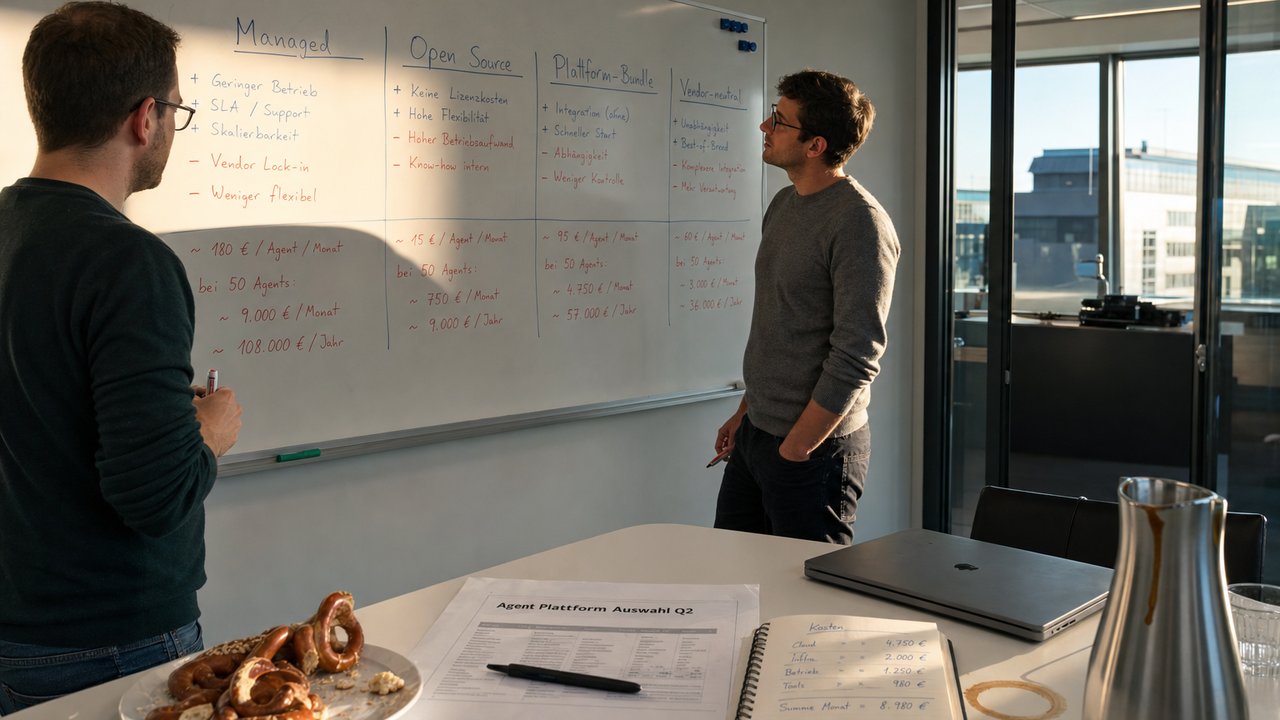

Four business models running in parallel

Vendors agree that the harness is the product. They disagree on how it should be paid for. Four models now sit side by side in the market, each suited to different use cases and risk profiles.

| Model | Vendor | Pricing logic | Strength | Lock-in risk |
|---|---|---|---|---|
| Pay-per-session | Anthropic Managed Agents | 0.08 USD per active session-hour, tokens on top | Predictable, fast onboarding | High (hosting only at Anthropic) |
| Open-source harness | OpenAI Agents SDK, Codex harness | Token consumption, hosting borne by you | Full control, no platform markup | Medium (tool format tied to OpenAI) |
| Platform bundle | Google Gemini Enterprise, Snowflake Cortex Code, Salesforce Agent Fabric, Microsoft | Part of a cloud or SaaS contract | Integrated into existing platform | Very high (cloud strategy follows) |
| Vendor-neutral control plane | Guild.ai | Own pricing, model-agnostic | Multi-vendor, own compliance | Low (models are interchangeable) |

Pay-per-session looks cheap, and the fees scale linearly with runtime and fleet size. At 0.08 US dollars per session-hour, an agent that runs eight hours a day costs around 19 US dollars per month, or roughly 230 US dollars per year, in session fees alone, plus tokens. Across 50 productive agents that yields a low five-figure annual amount (about 11,700 US dollars) before any model calls are counted. Platform bundles feel free because their cost disappears into the cloud bill, while increasing the dependency on the hyperscaler.
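The session-fee arithmetic follows directly from the 0.08 US dollar rate and is easy to check. The sketch assumes a 30-day month and a 365-day year, counts every running hour as an active session-hour, and leaves token costs out.

```python
RATE_USD_PER_SESSION_HOUR = 0.08  # rate quoted in the article


def session_fees(hours_per_day: float, days: int, agents: int = 1,
                 rate: float = RATE_USD_PER_SESSION_HOUR) -> float:
    """Session fees in USD, excluding token costs."""
    return rate * hours_per_day * days * agents


per_agent_month = session_fees(8, 30)             # one agent, 8 h/day, 30 days
per_agent_year = session_fees(8, 365)             # one agent, 8 h/day, 365 days
fleet_year = session_fees(8, 365, agents=50)      # 50 agents, 8 h/day, 365 days

print(f"{per_agent_month:.2f} {per_agent_year:.2f} {fleet_year:.2f}")
```

Under these assumptions one agent comes to about 19 US dollars per month and about 234 US dollars per year, and a fleet of 50 to about 11,700 US dollars per year.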

What Stanford's Meta-Harness paper shows

While vendors sell the harness as a product, Stanford is already automating its optimization. The Meta-Harness paper published on 30 March 2026 (Yoonho Lee, Roshen Nair, Qizheng Zhang, Kangwook Lee, Omar Khattab, Chelsea Finn) describes the first system that improves harness code iteratively via an LLM, reaching benchmark-leading scores in the process.

Methodologically, Meta-Harness uses a Claude Code agent as a proposer with unrestricted filesystem access to all prior configurations, traces and evaluation results. The system inspects this history with standard developer tools and reasons from past failures to design changes. The diagnostic context it works with is roughly 500,000 times larger than that of classic text optimizers, which work with compressed scalar rewards.

LangChain showed earlier that the same principle works without auto-optimization. Engineer Vivek Trivedy described in a blog post on 17 February 2026 how their in-house coding agent rose from 52.8 to 66.5 percent on Terminal-Bench 2.0 without changing the model, jumping from rank 30 to rank 5. The levers were a "reasoning sandwich" with deliberate compute distribution, three specialized middleware hooks for pre-completion checklists, local-context mapping and loop detection, and a clear phase split into plan, build, verify and fix.
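Of the three hooks mentioned, loop detection is the easiest to picture. The following is a hypothetical sketch of the idea, not LangChain's implementation: it flags a session when the same tool call with the same arguments recurs too often within a sliding window. The class name, window size and threshold are assumptions.

```python
from collections import deque


class LoopDetector:
    """Flags repeated identical tool calls within a sliding window."""

    def __init__(self, window: int = 6, max_repeats: int = 3) -> None:
        self.recent: deque[tuple[str, str]] = deque(maxlen=window)
        self.max_repeats = max_repeats

    def observe(self, tool: str, arguments: dict[str, object]) -> bool:
        # Normalize arguments so key order does not hide a repeat.
        call = (tool, repr(sorted(arguments.items())))
        self.recent.append(call)
        return self.recent.count(call) >= self.max_repeats
```

A harness could call `observe` after every tool invocation and, on `True`, inject a hint into the context or hand the session to the fix phase instead of letting the agent spin.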

The goal of a harness is to mold the inherently spiky intelligence of a model for the tasks we care about.

Vivek Trivedy, LangChain

European perspective

For European enterprises and regulated industries the new market situation cuts both ways. Pay-per-session and platform bundles simplify entry and relieve internal platform teams. At the same time the control plane concentrates with US hyperscalers, sharpening the digital sovereignty debate that the EU SEAL framework has already put in motion.

Hosting and data residency

Anthropic Managed Agents runs exclusively on Anthropic infrastructure. For industries with EU data residency requirements you must verify separately where sessions actually execute and which subprocessors are involved.

EU AI Act from 2 August 2026

High-risk obligations require traceable logs, human oversight, risk management and technical documentation. A control plane with an audit trail is a precondition for productive operation, not a nice-to-have.

GDPR and trade secrets

Sending tickets, contracts or code to a managed agent exports data. Data processing, sub-processor chains, retention periods and audit rights belong in the contract before any productive data flows.

For the European mid-market, hosted platforms lower the initial effort considerably. If you do not want to build your own platform team, Anthropic Managed Agents or Google Gemini Enterprise gets you to production faster. The price is a second cloud dependency on top of the existing hyperscaler choice. If you have already invested in a multi-cloud strategy or treat sovereignty as a hard constraint, vendor-neutral options like Guild.ai or a self-hosted Codex harness deserve a serious evaluation.

Challenges and risks

Fast time-to-market is no guarantee of stable production. Several points deserve scrutiny before productive agents are deployed on any of the new platforms.

Platform maturity is one such concern. Anthropic Managed Agents is a public beta, Guild.ai has only just launched, and Google Gemini Enterprise still has to prove its real limits under load. Going productive in 2026 takes more than a datasheet comparison; plan your own stress tests with realistic volumes.

What companies should do now

The platform decision is on the table now. Postponing it means migrating in three to six months from a configuration that has already taken root internally. Six steps lead to a defensible decision.

-

Take inventory

Which agents already run, on which harness, with which tools, on which data. Without this step every platform discussion goes nowhere.

-

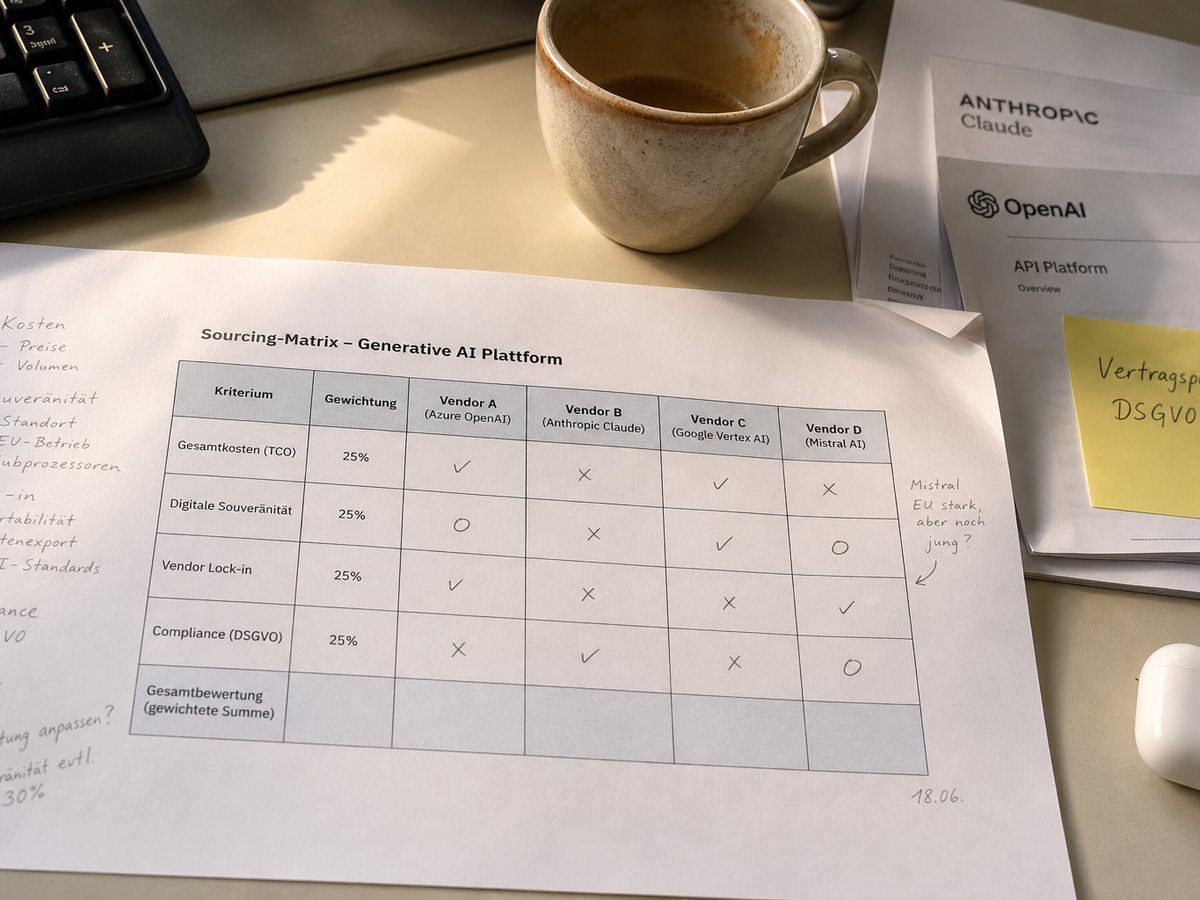

Build a sourcing matrix

Per use case, score the four models (managed, open source, platform bundle, vendor neutral) against cost, sovereignty, lock-in and compliance. One matrix per use case, no blanket verdict.

-

Pilot two vendors in parallel

Run identical tasks and identical evals against at most two platforms. Terminal-Bench 2.0, ARC-AGI-3 or your own task benchmark deliver comparable numbers.

-

Name a governance owner

One person from IT security or compliance owns the audit-trail review and the emergency stop. Without an owner the audit log is just a data graveyard.

-

Run contract review with data protection

Data processing, residency, sub-processors, audit rights and exit clause must be checked before any productive data flows. A missing exit clause hurts most later.

-

Watch the research

Stanford Meta-Harness is open source. If you plan to optimize your own configurations, keep an eye on the approach. Treat it as a tool, not as a copy-paste production recipe.
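The sourcing matrix from the steps above can be kept as a weighted score per use case. In this sketch the weights and every rating are made-up placeholders on a 1 to 5 scale (higher is better); they show the mechanics only and are not an assessment of any vendor or model.

```python
# Hypothetical weights for one use case; a regulated workload might weight sovereignty highest.
WEIGHTS = {"cost": 2, "sovereignty": 4, "lock_in": 2, "compliance": 2}

# Placeholder ratings (1 = poor, 5 = good), invented for illustration only.
EXAMPLE_MATRIX = {
    "managed":         {"cost": 4, "sovereignty": 2, "lock_in": 2, "compliance": 3},
    "open_source":     {"cost": 3, "sovereignty": 4, "lock_in": 3, "compliance": 3},
    "platform_bundle": {"cost": 4, "sovereignty": 2, "lock_in": 1, "compliance": 3},
    "vendor_neutral":  {"cost": 3, "sovereignty": 4, "lock_in": 4, "compliance": 3},
}


def score(ratings: dict[str, int], weights: dict[str, int]) -> int:
    missing = weights.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(weights[c] * ratings[c] for c in weights)


def rank(matrix: dict[str, dict[str, int]], weights: dict[str, int]) -> list[tuple[str, int]]:
    # Highest weighted score first.
    scored = [(model, score(ratings, weights)) for model, ratings in matrix.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)
```

Changing `WEIGHTS` per use case is the whole point: the same ratings can produce a different ranking for an internal coding agent than for a customer-facing one, which is why a blanket verdict does not work.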

Frequently asked questions

What is an agent control plane?

An agent control plane is the runtime infrastructure that starts, monitors, throttles and ends AI agents. It manages permissions, runs audit logs, distributes tools via the Model Context Protocol and ensures rollbacks. Unlike a pure model endpoint it is stateful and long-running.

What does Anthropic Managed Agents cost?

Anthropic Managed Agents costs 0.08 US dollars per active session-hour, billed to the millisecond. Idle time is free. Token costs apply on top at standard Claude API rates. There is no flat monthly fee and no per-agent license. A productive agent running eight hours a day generates roughly 19 US dollars per month, or about 230 US dollars per year, in session fees, plus tokens.

What is the difference between a harness and a control plane?

The harness is the software layer around a single AI model with tools, context curation, memory and hooks. A control plane manages many such harnesses centrally, with governance, audit, tool registry and billing. Put simply: the harness is the engine, the control plane is the workshop with maintenance records.

What does the Meta-Harness paper show?

Meta-Harness automates harness configuration optimization. A Claude Code agent acts as a proposer with filesystem access to all prior configurations, traces and evaluation results, using up to 10 million tokens of diagnostic data per optimization step. With Claude Opus 4.6 the system reaches a 76.4 percent pass rate on Terminal-Bench 2.0 (rank 2); with Haiku 4.5 it ranks first in the Haiku category. The takeaway: harness optimization is itself a solvable optimization problem.

Which platform should a company choose?

The choice depends on sovereignty, compliance and lock-in priorities. Pay-per-session models such as Anthropic Managed Agents simplify entry but tie hosting to the US. Open-source harnesses like Codex maximize control but require a platform team. Platform bundles such as Google Gemini Enterprise fit when the cloud strategy is already fixed. Vendor-neutral options like Guild.ai offer multi-vendor flexibility but are young. A sourcing matrix per use case is more defensible than a blanket verdict.

What does the EU AI Act require?

From 2 August 2026 the EU AI Act high-risk obligations apply to Annex III systems. A control plane with traceable logs, human oversight and risk management is a precondition, not a bonus. Important: audit logs without a review process do not satisfy the requirement. The "human oversight" duty means a documented process with named owners, not just writing log files.