Harness Engineering 2026: The Blueprint Around AI Agents

Harness engineering is the new discipline companies use in 2026 to build reliable AI agents. Anthropic, Thoughtworks, HumanLayer and Philipp Schmid all point in the same direction: the difference is decided in the framework, not in the model.

Harness engineering is the deliberate shaping of every component around an AI model: tools, context curation, feedback loops and sub-agents. Anthropic's own experiments show that a better framework raises task success on complex coding tasks by a factor of two to three without changing the model. On Terminal Bench 2.0 Claude Opus 4.6 moves from rank 33 to rank 5 depending on the environment. For European companies this means that running productive AI agents in 2026 requires documenting and measuring the harness, not just picking the model.

What is harness engineering

Harness engineering is the discipline of deliberately designing everything around an AI model: tools, context curation, feedback loops, memory, safety hooks and sub-agents. The term took hold in the first quarter of 2026 once Anthropic and OpenAI adopted it in their engineering communication. Its core thesis is simple. Differences between top models on static benchmarks are shrinking while differences in the runtime environment around them are growing. Running productive agents in 2026 means steering both, not only the model.

Philipp Schmid set the frame on 5 January 2026 with an analogy that has since shaped the discussion. The model is the CPU, the context window is the RAM, the agent harness is the operating system and the actual agent is the application. Picking only a model is like buying a computer without an operating system. Growing the context window is like adding RAM without a kernel. Only the interplay of all layers produces a working system.

Cobus Greyling frames the evolution in three phases. First came SDKs and frameworks that made agents possible as scaffolded code. Then came context engineering, which curated what went into the context window. Now comes harness engineering, which owns the entire runtime. The framework era is ending because today's models natively handle around 80 percent of what used to require frameworks. The remaining 20 percent covers persistence, deterministic replay, cost control, observability and error handling. That is where the harness lives.

The building blocks of an agent harness

A harness is made of recurring components, each with a single clear job. Birgitta Boeckeler of Thoughtworks published the clearest taxonomy on 2 April 2026. She distinguishes between guides that intervene before an action and sensors that give feedback after an action. Both can be deterministic, such as linters and type checks, or inferential, such as semantic validation by another AI model. The distinction helps teams allocate cost and effort on purpose.
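The guide and sensor split can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Boeckeler's implementation: the `Check` class, the `run_action` function and the `kind` labels are hypothetical names chosen for this example.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Check:
    """A guide or sensor. All names here are illustrative assumptions."""
    name: str
    kind: str                    # "deterministic" or "inferential"
    run: Callable[[str], bool]   # returns True when the check passes


def run_action(action: str, guides: List[Check], sensors: List[Check],
               execute: Callable[[str], str]) -> dict:
    """Apply guides before the action and sensors to its result."""
    # Guides intervene before anything is executed.
    for guide in guides:
        if not guide.run(action):
            return {"status": "blocked", "by": guide.name, "feedback": []}
    result = execute(action)
    # Sensors inspect the outcome and report what needs fixing.
    feedback = [s.name for s in sensors if not s.run(result)]
    status = "ok" if not feedback else "needs_fix"
    return {"status": status, "by": None, "feedback": feedback}
```

A deterministic guide might reject a destructive shell command, while a deterministic sensor might scan the output for an error marker. An inferential check would have the same shape but call a second model inside `run`, which is where the extra cost sits.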

Tool orchestration

File system access, shell commands, web search and API calls over a defined protocol such as MCP. Fewer tools are often better because they make selection easier.

Context curation

Long sessions save state in JSON progress files or git history. New sessions read these files and reconstruct status in seconds rather than minutes.

Permission model

Claude Code runs in read only mode by default. Every write requires explicit approval or a pre declared rule that allows the action.
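The idea of read only by default plus explicit allow rules can be illustrated with a short sketch. It does not reproduce Claude Code's actual configuration format or rule syntax. The action names, the `(action, pattern)` rule tuples and the `is_allowed` function are assumptions made for this example.

```python
from fnmatch import fnmatch

# Actions that never change state (assumed set for this sketch).
READ_ONLY_ACTIONS = {"read", "list", "search"}


def is_allowed(action: str, target: str,
               allow_rules: list[tuple[str, str]],
               approved: bool = False) -> bool:
    """Permit reads always; permit writes only via approval or a matching rule."""
    if action in READ_ONLY_ACTIONS:
        return True
    if approved:  # explicit human approval for this single action
        return True
    # A pre declared rule matches on action name and a glob pattern for the target.
    return any(action == rule_action and fnmatch(target, pattern)
               for rule_action, pattern in allow_rules)
```

With a single rule `("write", "src/*")`, a write to `src/app.py` passes, while a write to `deploy/prod.yaml` is refused until someone approves it.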

Hooks

Scripts that run before or after an action. Typically tests, linters and formatters that catch errors before they land in a commit.

Sub agents

Specialised helpers with their own context window. They take on research, testing or review and keep context rot out of the main agent.

Sandbox and snapshots

Every change is reversible and every execution runs in an isolated environment. Errors can be rolled back without touching the production state.

A good harness should not aim to fully eliminate human input, but to direct it to where our input is most important.

Birgitta Boeckeler, Thoughtworks

Boeckeler groups the building blocks into three regulation categories. The maintainability harness guards internal code quality. The architecture fitness harness watches runtime behaviour and non functional requirements. The behaviour harness validates functional correctness and is the least mature category in 2026. The three categories form concentric rings around the model. The further out a ring sits, the closer it is to what the user actually asked for.

What the Anthropic experiments show

Between November 2025 and March 2026 Anthropic published two detailed studies applying harness designs to complex tasks. The findings are clear. A better framework raises task success sharply, independent of the model. The two reports form the strongest evidence so far that harness investment pays back.

Two agent harness

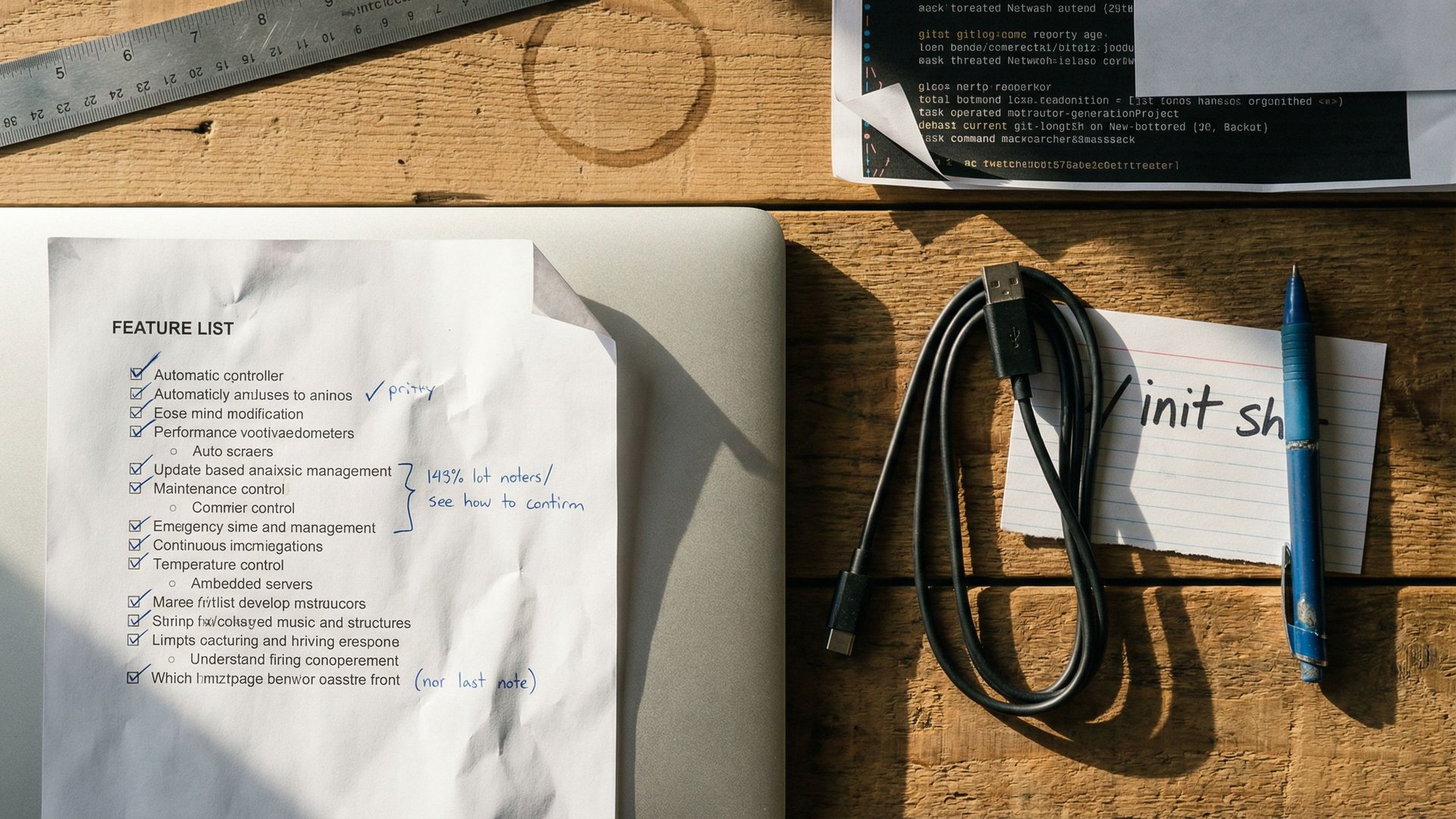

Justin Young describes an initializer plus coder setup. The initializer creates a git repo, a progress file and a feature list with over 200 entries. The coder works through the list incrementally. Each session can reconstruct the status in a few seconds.

Three agent harness

Prithvi Rajasekaran introduces a design with planner, generator and evaluator. The planner expands short prompts into detailed product specs. The generator implements incrementally. The evaluator tests with Playwright and grades against sprint contracts.

Harness engineering definition

Birgitta Boeckeler publishes the most precise taxonomy so far with guides and sensors. The Martin Fowler Blog makes the term accessible for the wider engineering community.

The concrete numbers make the effect tangible. A retro game maker produced no playable result after 20 minutes and 9 US dollars in a solo run. With a full harness the same project produced a functional app with AI features after six hours and roughly 200 US dollars. The full harness costs roughly 20 times more (about 200 versus 9 US dollars) but delivers a result that does not exist at all in the solo version. The DAW builder tells a similar story: three hours and 50 minutes with Opus 4.6 for around 125 US dollars produced a working music production app.

The technical core of the designs is a feature list as a JSON file with more than 200 granular entries, initially all marked as failing. Alongside it sit an init.sh for the environment and a claude-progress.txt for the actual progress. The agent works through the list one entry at a time, tests with Playwright or a browser MCP and commits after every successful feature. The central problem this solves is context anxiety, where models wrap up work too early when they sense they are approaching the token limit. Context resets and clear progress markers are the answer.
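The feature list mechanics described above can be sketched as follows. The source does not give the file's schema, so the field names `id`, `description` and `passes`, and all three function names, are assumptions made for illustration.

```python
import json
from pathlib import Path


def init_feature_list(path: Path, descriptions: list[str]) -> None:
    """Initializer step: write every feature as failing."""
    features = [{"id": i, "description": text, "passes": False}
                for i, text in enumerate(descriptions, start=1)]
    path.write_text(json.dumps(features, indent=2))


def next_feature(path: Path):
    """Coder step: pick the first feature that still fails, or None when done."""
    features = json.loads(path.read_text())
    return next((f for f in features if not f["passes"]), None)


def mark_passing(path: Path, feature_id: int) -> None:
    """Flip one feature to passing after its test succeeded."""
    features = json.loads(path.read_text())
    for feature in features:
        if feature["id"] == feature_id:
            feature["passes"] = True
    path.write_text(json.dumps(features, indent=2))
```

Because the state lives in a file rather than in the context window, a fresh session only has to call `next_feature` to know where to continue. In a real harness, a commit and a progress note would follow each `mark_passing` call.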

With a full harness, projects become possible that do not work in a solo run. Cost per run grows by a factor of 20 while success moves from zero to functional. That is not a linear investment, it is a binary decision about feasibility.

Critical studies and the overfitting trap

The new discipline is not beyond doubt. Three independent measurements show that auto generated harnesses can become expensive and that models are bound to specific environments. HumanLayer summarised the most important findings on 12 March 2026 and framed them as a warning. Harness engineering must not become an end in itself.

ETH Zurich: auto generated agent files

The research shows that LLM generated agent configuration files hurt performance and cost more than 20 percent extra. Hand written files yield only around four percent improvement on average. The lesson is that a few hand written rules usually beat many auto generated ones.

Chroma Research: context rot

The longer the context window, the more response quality drops. The effect is strongest when the question and the context are semantically far apart. More context is not the same thing as better context.

The sharpest single number comes from Terminal Bench 2.0. Claude Opus 4.6 lands at rank 33 inside the standard Claude Code environment. In a different harness configuration the same model jumps to rank 5. That is 28 ranks of difference with an identical model. The gap points to model overfitting on specific harness configurations. Companies that bet on a single environment risk falling off a cliff with their next model upgrade.

Why Vercel, Manus and LangChain keep rebuilding their agents: Vercel removed 80 percent of tools from its agent after internal testing and gained stability as a result. Manus rebuilt its system five times in six months. LangChain re-architected its research agent three times in one year. The message is simple. The harness is not a one shot investment. It must iterate.

HumanLayer's practical recommendation sounds almost banal but captures the core: keep CLAUDE.md under 60 lines. Configurations shorter than 60 lines beat long guides in practice. Every additional line costs tokens and distracts the model. The way into harness engineering is not complexity, it is restraint. Add rules only when real errors appear.
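A line budget like this is easy to enforce as a deterministic check, for example in a pre commit hook. The sketch below is one possible reading of the rule. It assumes that blank lines do not count and that "under 60" means strictly fewer than 60. The function name is hypothetical.

```python
def config_line_budget(text: str, max_lines: int = 60) -> tuple[int, bool]:
    """Count non-blank lines and report whether the file stays under the budget."""
    lines = [line for line in text.splitlines() if line.strip()]
    return len(lines), len(lines) < max_lines
```

In practice the `text` argument would be the contents of CLAUDE.md, and a failing result would block the commit or at least raise a warning in review.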

European perspective

For the European mid market and enterprise customers, harness engineering shifts priorities. Anyone who used to think only about model choice, hosting and GDPR now has to describe and document the runtime environment as well. That concerns procurement, architecture, compliance and governance all at once. The strategy gap in the German mid market, where 53 percent of companies fail to scale AI, is also a harness gap.

The EU AI Act demands transparency about decisions made by AI systems. Technically that requirement covers the full chain of model plus harness, not just the model. Any company that does not document which tools, sub agents and hooks were active during a decision cannot reconstruct that decision. The harness turns from a technical detail into a compliance artefact. The European debate around digital sovereignty shifts in the same direction. Sovereignty starts with the data paths inside the harness, not at the choice of data centre.

What European companies need to do differently: The harness decides which data ends up where and when. European customers need a clear answer on whether sub agents send data to external systems or run inside their own sandboxes. Teams fighting vendor lock in on AI agent platforms will find that the harness is the real battleground for interchangeability. Open harnesses such as the LangChain Agent SDK or HKUDS OpenHarness offer practical escape routes.

Energy demand is the third European dimension. Three agent setups cost 20 times more than single shot runs and produce dramatically better results. For the operators behind the German data centre strategy, that translates into a new planning variable. Harness designs define the load curves, not the models themselves. A run with several parallel sub agents creates load peaks that networks and cooling must be sized for.

Challenges and risks

Harness engineering does not run itself. Too much infrastructure brings complexity and vendor lock in. Too little brings context rot and results that cannot be reproduced. The balance is only found through your own measurements.

Over engineering

HumanLayer warns against configuration added too early. The recommendation is to start first and add rules only when real errors appear. Every additional line in CLAUDE.md costs tokens and distracts the model.

Single vendor dependency

Building your entire harness on Claude Code ties you to one platform. The leaked architecture details also show how strongly individual design choices shape behaviour.

Context rot

More context is not the same as better context. Context windows need active compaction or quality drops. Chroma Research has measured the effect across all large models.

Missing observability

Without logging of tool calls and hooks, errors are hard to reconstruct. A harness without telemetry is like an aircraft without a flight data recorder.
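A flight data recorder for tool calls can start as a simple decorator that writes one JSON record per call. This is a minimal sketch, not the logging mechanism of any specific agent platform. The `logged_tool` name and the record fields are assumptions, and a real setup would append to a file or a telemetry backend instead of a list.

```python
import json
import time
from functools import wraps


def logged_tool(log: list):
    """Wrap a tool so that every call appends one JSON line to `log`."""
    def decorator(fn):
        @wraps(fn)
        def wrapper(*args, **kwargs):
            record = {"tool": fn.__name__, "args": repr(args),
                      "kwargs": repr(kwargs), "ts": time.time()}
            start = time.perf_counter()
            try:
                result = fn(*args, **kwargs)
                record["ok"] = True
                return result
            except Exception as exc:
                # Failures are recorded too, then re-raised unchanged.
                record["ok"] = False
                record["error"] = repr(exc)
                raise
            finally:
                record["duration_s"] = round(time.perf_counter() - start, 6)
                log.append(json.dumps(record))
        return wrapper
    return decorator
```

Because failed calls are recorded before the exception propagates, the log still shows what the agent attempted when a run breaks.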

Skill overfitting

The agent skills reality check shows that skills work under ideal conditions and collapse in the real world. The harness decides whether the measurement is realistic.

Cost explosion

Three agent setups cost 20 times more than single shot runs. Without budget guard rails, spending escalates quickly, especially on long running projects without clear exit criteria.
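A budget guard rail can be as small as a counter that stops the run once a spending or step limit is crossed. The sketch below assumes the harness knows the cost of each step in US dollars. The class and exception names are hypothetical.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a run crosses its spending or step limit."""


class BudgetGuard:
    def __init__(self, max_usd: float, max_steps: int):
        self.max_usd = max_usd
        self.max_steps = max_steps
        self.spent_usd = 0.0
        self.steps = 0

    def charge(self, usd: float) -> None:
        """Record one agent step and abort the run when a limit is exceeded."""
        self.spent_usd += usd
        self.steps += 1
        if self.spent_usd > self.max_usd:
            raise BudgetExceeded(f"spent {self.spent_usd:.2f} USD, limit {self.max_usd:.2f}")
        if self.steps > self.max_steps:
            raise BudgetExceeded(f"{self.steps} steps, limit {self.max_steps}")
```

The step limit acts as a crude exit criterion for long running projects, where cost per step is small but the number of steps is open ended.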

Extra risk for the European mid market: The Anthropic experiments use the strongest available models. Many European companies pick smaller or open models for cost or sovereignty reasons. A harness tuned for Opus 4.6 will often not deliver the same results with Kimi or Qwen. The harness configuration is model dependent and needs to be retested for every model choice.

What companies should do now

The following concrete steps let decision makers approach the topic in a structured way instead of waiting for the next model release. The seven points are achievable in 90 days and require no platform decision. They draw directly from Boeckeler, HumanLayer, Schmid and Anthropic.

1. Inventory current tools and hooks

Which tools, hooks, sub agents and context sources are in use today? Undocumented setups are the most common failure. A simple table listing name, purpose, data path and owner is a sufficient starting point.
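The inventory table can live in a spreadsheet, but keeping it as structured data makes gaps easy to detect. The following sketch uses the four columns named above. The class name, the helper function and the sample entries are illustrative assumptions.

```python
from dataclasses import dataclass, fields


@dataclass
class HarnessComponent:
    name: str
    purpose: str
    data_path: str   # where data flows, e.g. "external API" or "local sandbox"
    owner: str


def undocumented(components: list[HarnessComponent]) -> list[str]:
    """Return the names of components with at least one empty column."""
    return [c.name for c in components
            if any(not getattr(c, f.name).strip() for f in fields(c))]
```

Running `undocumented` over the inventory gives a to-do list of components whose data path or owner nobody has written down yet.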

2. Start small and keep CLAUDE.md under 60 lines

A minimal configuration with few tools and a short CLAUDE.md beats the Swiss army knife. Every additional rule must address a real failure, not a hypothetical one.

3. Build feedback loops

Tests, linters and type checks are deterministic sensors that work without AI and verify every action. They are cheap, fast and reliable. Every pipeline should have at least one deterministic check per action.

4. Introduce progress files for long projects

Long running projects need an external progress file so that new sessions can catch up quickly. A claude-progress.txt alongside the git history is the simplest entry point. It captures the current state, the next steps and open questions.

5. Capture metrics

Success rate per task type, cost per task, token spend and context reset count. Without these four numbers every harness debate is opinion, not engineering.
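The four numbers can be derived from a plain list of run records. This is a minimal sketch that assumes each run is logged as a dictionary with the keys shown. The key names and the `summarise` function are not taken from any particular tool.

```python
from collections import defaultdict


def summarise(runs: list[dict]) -> dict:
    """Aggregate the four harness metrics per task type."""
    by_type = defaultdict(list)
    for run in runs:
        by_type[run["task_type"]].append(run)
    summary = {}
    for task_type, group in by_type.items():
        count = len(group)
        summary[task_type] = {
            "success_rate": sum(r["success"] for r in group) / count,
            "cost_per_task_usd": sum(r["cost_usd"] for r in group) / count,
            "tokens": sum(r["tokens"] for r in group),
            "context_resets": sum(r["context_resets"] for r in group),
        }
    return summary
```

Tracking the summary per task type rather than globally shows where a harness change helped and where it only moved cost around.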

6. Review the harness every 90 days

An architecture review every three months with an eye on new models and real task feedback. The harness is not a one off investment, it is a living part of the platform. Manus, LangChain and Vercel have all rebuilt theirs multiple times. That should be the template.

7. Benchmark across at least two environments

Terminal Bench 2.0 shows that models perform very differently across harness setups. Benchmarking Claude Code against a second framework exposes overfitting and dependencies quickly and guards against surprises on the next model upgrade.
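A cross environment comparison does not need a full benchmark suite to be useful. The sketch below assumes the same task set was run with the same model in each environment and that every task yields a pass or fail outcome. The function name and the environment labels are hypothetical.

```python
def harness_gap(results: dict[str, list[bool]]) -> tuple[dict[str, float], float]:
    """Return the success rate per environment and the spread between best and worst."""
    rates = {env: sum(outcomes) / len(outcomes)
             for env, outcomes in results.items()}
    gap = max(rates.values()) - min(rates.values())
    return rates, gap
```

A large gap with an identical model suggests that the result depends on the environment rather than on the model, which is the dependency this step is meant to expose.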

Harness engineering is not an innovation project but a standard engineering discipline for productive AI agents. Starting now builds measurement, governance and observability in one pass. Waiting means watching 40 percent of your agent projects get scrapped two years from now, just as Gartner predicts.

Conclusion

Harness engineering is the discipline that will decide in 2026 whether an AI agent becomes a product or stays an experiment. The primary sources are unambiguous. Anthropic shows in two studies of its own that a better framework raises task success by a factor of two to three. Birgitta Boeckeler delivers the clearest taxonomy with guides and sensors. Philipp Schmid coins the operating system metaphor. HumanLayer and ETH Zurich warn rightly against over automation.

For European decision makers the strategic debate shifts. The question is no longer which model you pick but which framework you run in which governance with what documentation. The EU AI Act, data centre planning, the sovereignty debate and the German mid market statistics all meet at the harness. Teams that master it gain a concrete technical lever. Teams that ignore it will be talking about cancelled projects in 2027.

The good news is that the entry point stays small. A short CLAUDE.md, a progress file, a handful of deterministic tests and an inventory list. That is the first 90 days. After that come measurement, iteration and reviews. Harness engineering is not a science waiting for a breakthrough. It is an engineering discipline that improves through application and does no harm through restraint.

Frequently asked questions

Harness engineering is the discipline of deliberately designing everything around an AI model: tools, context curation, feedback loops, memory, safety hooks and sub-agents. The term took hold in early 2026 after Anthropic and OpenAI adopted it in their engineering communication. It describes the runtime environment around a language model that coordinates tool calls, compacts context, enforces safety and persists session state over time.

Differences between top models on static benchmarks are shrinking while differences in the runtime environment are growing. Anthropic's own research shows that better harnesses raise task success rates on complex coding tasks by a factor of two to three without changing the underlying model. On Terminal Bench 2.0, Claude Opus 4.6 moves from rank 33 to rank 5 depending on the harness configuration. That demonstrates that the environment, not the model, decides outcomes.

Birgitta Boeckeler of Thoughtworks defined harness engineering on 2 April 2026 as the interplay of guides and sensors. Guides intervene before an action and prevent problems, sensors observe after an action and enable self correction. Both can be deterministic, such as linters and type checks, or inferential, such as semantic validation via another AI model. The distinction helps teams allocate cost and effort on purpose.

Anthropic published two studies. On 26 November 2025, Justin Young described a two agent harness with an initializer and a coder that works through a feature list of more than 200 entries. On 24 March 2026, Prithvi Rajasekaran presented a three agent design of planner, generator and evaluator. A retro game maker produced no playable result after 20 minutes and 9 US dollars in a solo run. With a full harness the same project produced a functional app with AI features after six hours and around 200 US dollars.

An ETH Zurich study shows that automatically generated agent configuration files hurt performance and cost more than 20 percent extra, while hand written files yield only around four percent improvement on average. Chroma Research demonstrates that response quality degrades as context length grows, an effect known as context rot. Vercel removed 80 percent of tools from its agent and gained stability as a result.

First, inventory the current tool and hook landscape. Second, start small and keep CLAUDE.md under 60 lines. Third, add feedback loops with tests, linters and type checks. Fourth, use progress files for long running projects. Fifth, capture critical metrics such as success rate, cost per task and context reset count. Sixth, review the harness every 90 days. Seventh, benchmark models across at least two environments because Terminal Bench 2.0 shows that the same configuration performs very differently.