Vibe Coding Under Fire: AI-Generated Code Produces Growing Security Vulnerabilities

74 documented CVEs, a security pass rate of 55 percent, and an NCSC chief warning of "intolerable risks." The productivity promises of AI coding tools are real, but the price is becoming visible.

AI coding tools like Claude Code and GitHub Copilot produce measurable security vulnerabilities in production code. Georgia Tech researchers have documented 74 CVEs directly traced to vibe coding since May 2025, with 35 in March 2026 alone. Veracode's Spring 2026 study shows the security pass rate of AI-generated code stagnates at around 55 percent, despite massive improvements in syntax and functionality. The UK NCSC urges enterprises to build deterministic security controls around AI-generated code rather than trusting one AI to check another.

Vibe Coding Produces Real Security Vulnerabilities, and the Numbers Are Rising

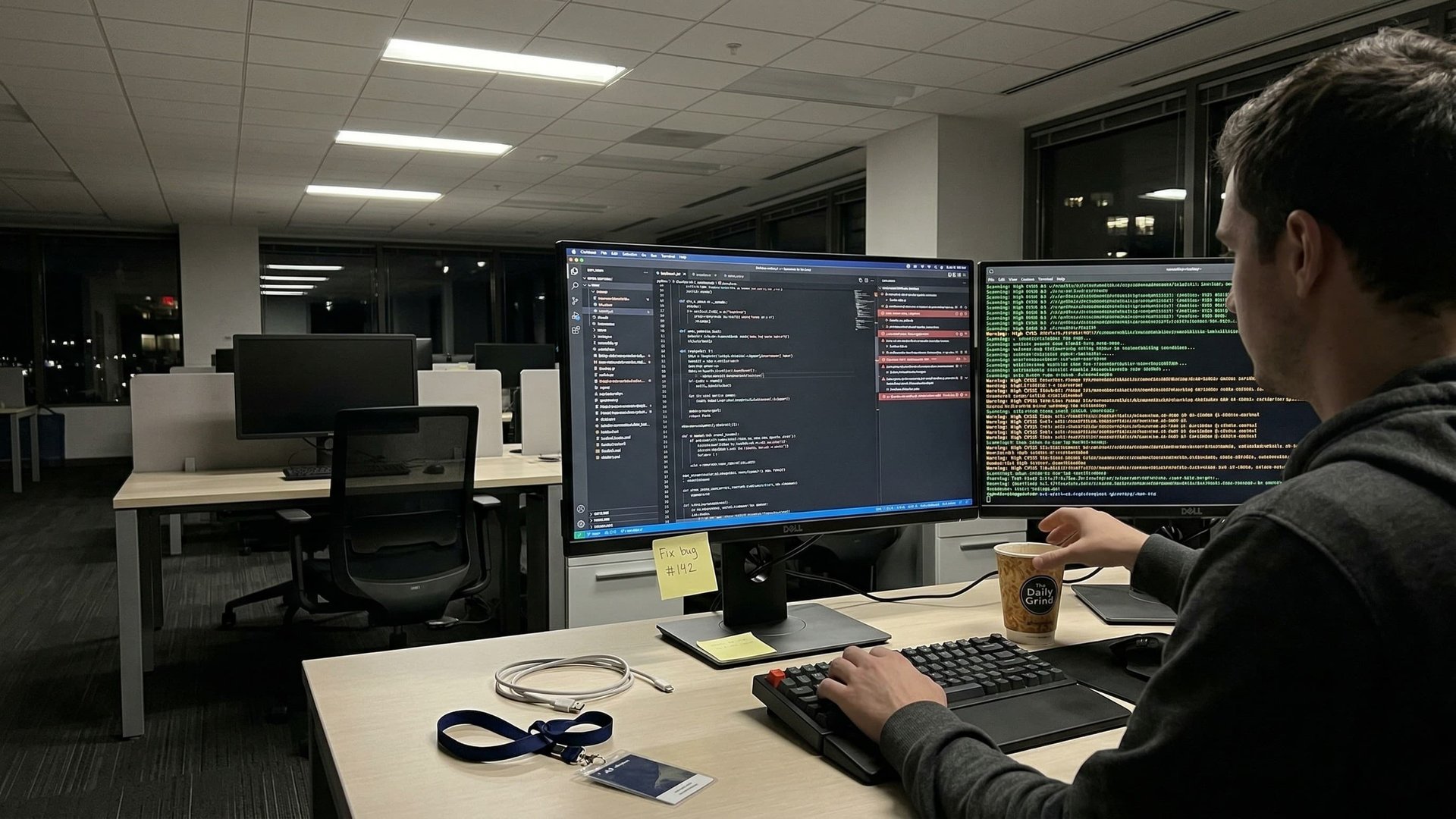

AI coding tools deliver real productivity gains. Developers report enormous time savings. But in parallel, a problem is growing that many enterprises have not yet recognized: vibe coding introduces measurable security vulnerabilities into production systems.

Researchers at Georgia Tech's SSLab have operated the "Vibe Security Radar" since May 2025, systematically tracking CVEs (Common Vulnerabilities and Exposures) directly attributable to AI coding tools. The results are alarming.

The 74 CVEs documented so far are likely an undercount. The researchers estimate the actual number to be 5 to 10 times higher, because many AI tools leave no metadata traces in commits.
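As a quick back-of-the-envelope check, the multiplier can be applied to the documented count. The snippet below is only an illustration of that arithmetic; the function name is made up for this example, and the inputs are the figures quoted in this article.

```python
# Figures quoted in the article; the helper itself is purely illustrative.
DOCUMENTED_CVES = 74
LOW_FACTOR, HIGH_FACTOR = 5, 10


def estimated_range(documented, low_factor, high_factor):
    """Return the (low, high) totals implied by an undercount multiplier."""
    return documented * low_factor, documented * high_factor


low, high = estimated_range(DOCUMENTED_CVES, LOW_FACTOR, HIGH_FACTOR)
print(f"Implied actual CVE count: {low} to {high}")
```

Taken at face value, the estimate implies several hundred CVEs rather than several dozen.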

Breaking down by tool: Claude Code leads with 49 CVEs (11 rated critical), followed by GitHub Copilot with 15 CVEs. These numbers do not reflect the actual risk distribution, however. They mainly reflect how easily each tool's output can be attributed: Claude Code, as a CLI tool, leaves clear metadata traces in commits, while Copilot's inline suggestions are invisible in commit history.

Why AI Tools Write Insecure Code

The security problems of AI-generated code are not a temporary shortcoming that will resolve itself with the next model generation. They have structural causes.

Veracode's Spring 2026 study delivers the central insight: the security pass rate of AI-generated code sits at around 55 percent, virtually identical to the figure from two years ago. Syntax and functionality have improved massively. Security has not.

LLMs learn from public code repositories that historically contain insecure patterns. String concatenation for SQL queries, missing input validation, outdated authentication patterns - all of this gets reproduced because it appears frequently in training data, not because it is correct.
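To make the string-concatenation pattern concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The table, the function names, and the payload are invented for illustration; the point is only the contrast between splicing input into the SQL text and passing it as a bound parameter.

```python
import sqlite3


def find_user_unsafe(conn, username):
    # Vulnerable: user input becomes part of the SQL text itself.
    query = "SELECT id, name FROM users WHERE name = '" + username + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, username):
    # Parameterized: the value is bound separately and never parsed as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (username,)
    ).fetchall()


def make_demo_db():
    # Hypothetical in-memory table, used only for this demonstration.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


conn = make_demo_db()
payload = "nobody' OR '1'='1"
print("unsafe rows:", len(find_user_unsafe(conn, payload)))
print("safe rows:", len(find_user_safe(conn, payload)))
```

With the crafted input, the concatenated query matches every row in the table, while the parameterized version treats the whole payload as a literal name and matches nothing.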

Java code is particularly affected. Models reproduce legacy patterns that predate modern security frameworks. An LLM cannot assess whether a pattern is secure. It generates what is statistically likely, not what is correct.

Reasoning models such as GPT-5 with extended reasoning achieve 70 to 72 percent. Better, but still far from the level required for production code in regulated industries.

The Trust Paradox: More Usage, Less Trust

The developer community uses AI tools intensively while simultaneously losing trust in their output. This is not a contradiction but an expression of growing experience.

Stack Overflow's 2025 Developer Survey, based on over 49,000 participants, paints a clear picture: 84 percent of developers use AI tools (2024: 76 percent), but only 29 percent trust the output (2024: 40 percent). 45 percent report that debugging AI-generated code takes longer than writing it themselves.

Vibe coding, the practice of creating entire projects with AI and without human code review, is spreading particularly among less experienced developers, precisely the group least equipped to identify security problems.

For enterprises, this creates a double risk: usage is increasing while critical review is decreasing, and the group that trusts AI code the most has the least experience to catch its errors.

What the UK NCSC Demands, and Why It Matters for Europe

The UK National Cyber Security Centre (NCSC) addressed vibe coding as a central security issue at the RSA Conference on 24 March 2026. The recommendations are directly transferable to European enterprises.

AI-generated code currently poses intolerable risks, but also offers the opportunity to make software more secure than the manual status quo.

Secure-by-Default

Build security mechanisms directly into AI coding tools, not as an afterthought

Trust but Verify

Treat AI-generated code as untrusted by default and review it systematically

Deterministic Controls

Build rule-based security checks around AI code instead of AI-controls-AI approaches

In parallel with the vibe coding debate, another problem is emerging: by the first week of March 2026, over 30 vulnerabilities in the Model Context Protocol (MCP) had been reported within a span of 60 days. AI infrastructure itself is becoming an attack target.

Relevance for European Enterprises

AI-generated code in critical infrastructure (energy, finance, healthcare) potentially falls under the high-risk category of the EU AI Act. Combined with the NIS2 directive, this creates a regulatory environment that compels enterprises to document and trace AI-generated code.

Challenges and Risks

The situation is more complex than a simple "AI code is insecure." Enterprises face a genuine dilemma between productivity gains and security risks.

The Productivity Dilemma

The business advantages of vibe coding are real. An NCSC example describes a startup that replaced an entire SaaS product with an AI-coded alternative in a few hours. The question is not whether enterprises will use AI coding tools, but how they can do so securely.

The demand that "every AI-generated line must be human-reviewed" already hits practical limits. When half of a codebase is machine-generated, there is simply not enough human capacity for complete reviews.

Shadow AI in Coding

38 percent of employees share confidential company data with unapproved AI systems. In the coding context, this means developers use AI tools outside the official security stack, and the generated code flows unchecked into production systems.

The CVE tracking methodology itself has limitations. Many AI tools leave no metadata in commits. Copilot's inline suggestions are invisible. Open-source projects that rely heavily on vibe coding have hundreds of security advisories, but AI traces are often deliberately removed by authors. The documented 74 CVEs are very likely just the tip of a much larger problem.

What Enterprises Should Do Now

Any organisation deploying AI coding tools needs concrete measures. Waiting for perfect tools is not an option, because adoption is already happening.

Integrate Security Analysis into the AI Coding Pipeline

Apply SAST and DAST tools to AI-generated code before it reaches production. This check must run automatically in the CI/CD pipeline, not as an optional manual step.
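What an automatic, non-optional check might look like can be sketched as a small gate script. This is an assumption-laden illustration, not the interface of any particular scanner: the JSON report shape (a list of objects with `severity`, `file`, `line`, and `rule` fields) and the blocking threshold are hypothetical and would need to be mapped to whatever your SAST or DAST tool actually emits.

```python
import json

# Hypothetical threshold: which severities should fail the build.
BLOCKING_SEVERITIES = {"critical", "high"}


def blocking_findings(findings):
    """Keep only findings whose severity is configured to block a merge."""
    return [
        f for f in findings
        if str(f.get("severity", "")).lower() in BLOCKING_SEVERITIES
    ]


def gate(report_text):
    """Return a process exit code: 1 if any blocking finding exists, else 0."""
    blockers = blocking_findings(json.loads(report_text))
    for f in blockers:
        print(f"{f.get('file')}:{f.get('line')}: {f.get('rule')} ({f.get('severity')})")
    return 1 if blockers else 0


# Hypothetical report, standing in for real scanner output.
example_report = json.dumps([
    {"severity": "high", "file": "app/db.py", "line": 42, "rule": "sql-injection"},
    {"severity": "low", "file": "app/ui.py", "line": 7, "rule": "todo-comment"},
])
print("exit code:", gate(example_report))
```

In a pipeline, the return value would be passed to `sys.exit` so that a blocking finding fails the job instead of producing a report nobody reads.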

Establish a Governance Framework for AI Coding

Define which tools are permitted, which code areas may be AI-generated, and which may not. Security-critical areas such as authentication, cryptography, and database access deserve special rules.
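Such rules are easier to enforce when they are written down in a machine-checkable form. The following sketch assumes a hypothetical repository layout and a hypothetical policy list; it simply flags changed files that fall into areas where AI-generated code is not permitted.

```python
from fnmatch import fnmatch

# Hypothetical policy: paths where AI-generated changes are not allowed.
# The directory names are placeholders for a real repository layout.
RESTRICTED_PATTERNS = ["src/auth/*", "src/crypto/*", "src/db/*"]


def restricted_files(changed_files, patterns=RESTRICTED_PATTERNS):
    """Return the changed files that match any restricted path pattern."""
    return [
        path for path in changed_files
        if any(fnmatch(path, pattern) for pattern in patterns)
    ]


changed = ["src/auth/login.py", "src/ui/button.py", "src/db/queries.py"]
print(restricted_files(changed))
```

A check like this only becomes meaningful in combination with a reliable way of knowing which changes were AI-generated in the first place.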

Document and Trace AI-Generated Code

For regulated industries (energy, finance, healthcare), this is not a recommendation but a requirement. The EU AI Act and NIS2 demand transparency. Metadata in commits, labels in issue trackers, and documentation in code reviews create the necessary traceability.
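One lightweight way to use commit metadata is to scan commit messages for agreed markers. The trailer names and patterns below are assumptions chosen for illustration, not a standard and not a statement about what any specific tool writes; an organisation would substitute the markers its own tools and policy produce.

```python
import re

# Hypothetical markers; replace with the trailers your tooling actually emits.
AI_TRAILER_PATTERNS = [
    re.compile(r"^AI-Assisted:\s*(yes|true)\s*$", re.IGNORECASE | re.MULTILINE),
    re.compile(r"^Co-authored-by:.*\b(claude|copilot)\b", re.IGNORECASE | re.MULTILINE),
]


def is_ai_assisted(commit_message):
    """Return True if the commit message carries one of the configured markers."""
    return any(p.search(commit_message) for p in AI_TRAILER_PATTERNS)


message = "Fix login redirect\n\nCo-authored-by: Claude <noreply@example.com>"
print(is_ai_assisted(message))
print(is_ai_assisted("Fix typo in README"))
```

A scan like this can only find what was recorded. Code from tools that leave no trace, or commits whose markers were removed, will not show up.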

Deterministic Controls Instead of AI-Controls-AI

Adopt the NCSC principle: build rule-based, deterministic security checks around AI-generated code. Do not rely on a second AI model to catch the first model's errors.

Pilot Projects with Measurable Security Metrics

Systematically track the vulnerability rate of AI-generated code, not just productivity gains. Only those who measure both sides can make informed decisions about deploying AI coding tools.
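One possible metric, offered here as an assumption rather than something the cited studies prescribe, is confirmed vulnerabilities per thousand lines of code, tracked separately for AI-generated and human-written changes. The numbers in the sketch are placeholders, not measurements.

```python
def vulnerabilities_per_kloc(vulnerabilities, lines_of_code):
    """Confirmed vulnerabilities per 1,000 lines of code."""
    if lines_of_code <= 0:
        raise ValueError("lines_of_code must be positive")
    return vulnerabilities / (lines_of_code / 1000)


# Placeholder pilot data, invented for illustration only.
pilot = {
    "ai_generated": {"vulnerabilities": 6, "lines_of_code": 12_000},
    "human_written": {"vulnerabilities": 4, "lines_of_code": 16_000},
}

for origin, stats in pilot.items():
    rate = vulnerabilities_per_kloc(stats["vulnerabilities"], stats["lines_of_code"])
    print(f"{origin}: {rate:.2f} per KLOC")
```

Reported next to the productivity figures, a rate like this lets a pilot be judged on both sides of the trade-off.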

Train Developers in Critical Evaluation

Training should focus not on operating the tools, but on critically evaluating their output. Developers must learn to read AI-generated code with the same scepticism they would apply to unreviewed code from an unknown contributor.

AI coding tools are not a safe black box. They are powerful instruments with documented weaknesses. The 74 CVEs from Georgia Tech SSLab, Veracode's stagnant 55 percent security rate, and the NCSC warning send a clear signal: enterprises deploying AI coding tools need a security strategy that goes beyond "we trust the tool." The productivity gains are real. So are the risks.

Frequently Asked Questions

Georgia Tech researchers have documented 74 CVEs directly traced to AI coding tools since May 2025. The most common vulnerabilities include missing input validation, insecure SQL queries via string concatenation, and flawed authentication patterns. Researchers estimate the actual number is 5 to 10 times higher, as many tools leave no metadata traces.

According to Veracode's Spring 2026 study, the security pass rate of AI-generated code sits at around 55 percent, virtually unchanged from two years ago. Reasoning models achieve 70 to 72 percent, which is better but still means roughly three in ten code snippets contain a vulnerability.

NCSC CEO Richard Horne addressed vibe coding as a central security issue at the RSA Conference 2026. The three core recommendations: build secure-by-default into AI tools, adopt a trust-but-verify approach, and use deterministic architectures instead of AI-controls-AI approaches.

The EU AI Act requires compliance for high-risk AI systems from August 2026. AI-generated code in critical infrastructure such as energy or finance potentially falls into this category. Combined with the NIS2 directive, the requirements for software security are tightening significantly across Europe.

Six concrete measures: integrate SAST/DAST tools into the CI/CD pipeline, establish a governance framework for AI coding, document and trace AI-generated code, build deterministic controls instead of AI-controls-AI, set up pilot projects with measurable security metrics, and train developers in critically evaluating AI output.

Stack Overflow's 2025 Developer Survey shows 84 percent of developers use AI tools, but only 29 percent trust the output (down from 40 percent in 2024). The main reason is growing experience: 66 percent report solutions that are "almost right but not quite," and 45 percent say debugging AI code takes longer than writing it themselves.

According to Georgia Tech SSLab's Vibe Security Radar, Claude Code leads with 49 CVEs (11 critical), followed by GitHub Copilot with 15 CVEs. However, the numbers do not reflect actual risk distribution: Claude Code leaves clear metadata traces in commits, while Copilot's inline suggestions are invisible in commit history.