AI Act Omnibus 2026: Trilogue Failed, August Deadline Stands

The second political trilogue on the AI Act Omnibus collapsed after twelve hours without agreement. As long as the package is not adopted, August 2, 2026 remains legally binding. That leaves roughly 90 days to the deadline, fines of up to EUR 35 million in play, a follow-up trilogue on May 13, and Germany building its own market surveillance regime in parallel.

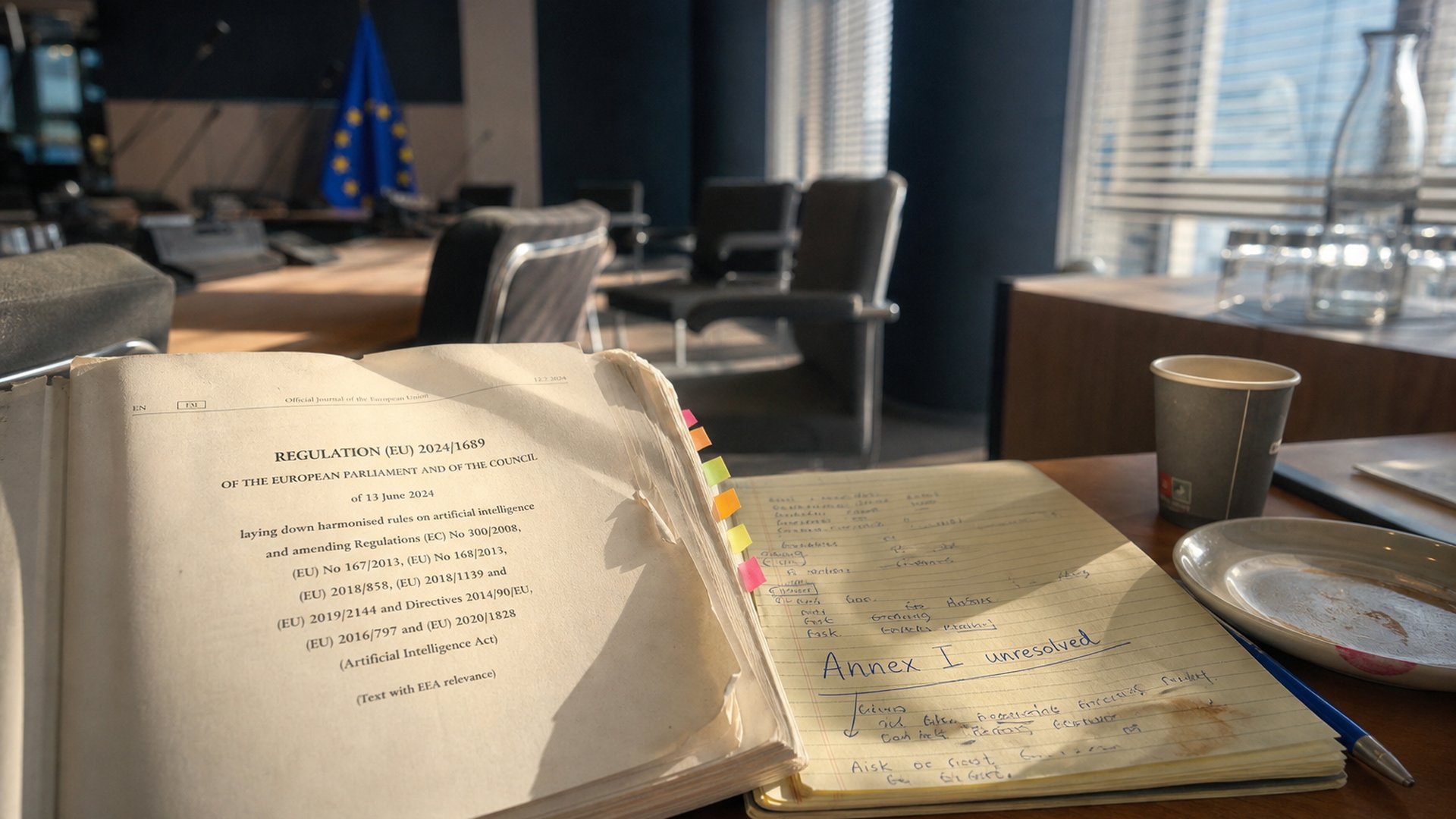

The AI Act Omnibus trilogue on April 28, 2026 ended after roughly twelve hours without agreement. The sticking point was the treatment of AI in regulated products (Annex I). A near-consensus deferral of high-risk obligations to December 2, 2027 was therefore not adopted. Until the Omnibus passes, August 2, 2026 remains the legally binding date for Annex III systems under Articles 9-15 of the AI Act. Germany is meanwhile setting up the Coordination and Competence Centre for the AI Regulation (KoKIVO) at the Bundesnetzagentur via the KI-MIG, with a EUR 500 million SME funding programme. Penalties reach up to EUR 35 million or 7 percent of worldwide annual turnover.

What happened on April 28

The second political trilogue on the AI Act Omnibus between European Parliament, Council, and Commission collapsed on April 28, 2026 after roughly twelve hours of negotiation without agreement. Hopes for a deferral of high-risk obligations are now postponed to mid-May at the earliest, while the original August 2, 2026 deadline keeps moving closer.

State of negotiations: Several elements had already converged before the trilogue: deferral of Annex III to December 2, 2027, deferral of Annex I to August 2, 2028, a ban on non-consensual synthetic intimate imagery, a streamlined Article 49 registration database, and intact GPAI obligations under Articles 50-55. A single unresolved file blocked the entire package.

Negotiators and procedural state

- Parliament lead negotiator: Michael McNamara (Renew Europe, Ireland)

- EPP rapporteur: Kokalari (Sweden)

- Council Presidency: Cyprus (mandate until June 30, 2026)

- Follow-up trilogue scheduled for May 13, 2026

- From July 1, 2026 Lithuania assumes the Council Presidency

- More than 40 organisations had signed an open letter against the proposed weakening before the trilogue

Routing AI governance through sectoral legislation could end up being deregulatory rather than simplifying.

Michael McNamara, lead negotiator, European Parliament

The sticking point: Annex I and sectoral safety law

The trilogue stalled on a technically dense but politically charged question: should AI systems built into already regulated products as safety components fall under the AI Act in addition to sectoral conformity assessment, or not? Affected categories include medical devices, machinery, toys, and connected vehicles.

Sectors directly affected

- Medical Device Regulation (MDR) - AI in diagnostics, surgical robotics, patient monitoring

- In Vitro Diagnostic Regulation (IVDR) - AI in lab analytics and image interpretation

- Machinery Regulation - AI in industrial and manufacturing automation

- Toy Safety Directive - AI in interactive children's toys

- Vehicle approval - AI components in connected cars

What is legally binding: August 2, 2026 is the real deadline

Until the Omnibus is formally adopted, the originally scheduled applicability date for high-risk AI under Annex III still applies: August 2, 2026. Companies should not treat the deferral to December 2027 as a fact, but as a conditional target.

Practical consequence: Anyone non-compliant on August 3, 2026 risks enforceable violations with fines up to EUR 15 million or 3 percent of worldwide annual turnover. Until formal adoption, the deferral remains speculation.
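The remaining runway is plain date arithmetic. The following is a minimal sketch using only the dates named in this article; the constant and function names are illustrative, not part of any official tooling.

```python
from datetime import date

# Dates as given in this article; the names are illustrative only.
TRILOGUE_FAILED = date(2026, 4, 28)
FOLLOW_UP_TRILOGUE = date(2026, 5, 13)
ANNEX_III_DEADLINE = date(2026, 8, 2)


def days_until(target: date, today: date) -> int:
    """Return the number of calendar days from `today` to `target`."""
    return (target - today).days


if __name__ == "__main__":
    print(days_until(ANNEX_III_DEADLINE, TRILOGUE_FAILED))
    print(days_until(ANNEX_III_DEADLINE, FOLLOW_UP_TRILOGUE))
```

Counted from the failed trilogue, 96 days remain. Counted from the follow-up trilogue on May 13, it is 81.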

Obligations under Articles 9-15

Art. 9 Risk management

Lifecycle-based risk management with documented identification, estimation, and mitigation of foreseeable risks.

Art. 10 Data governance

Training, validation, and test datasets must be relevant, representative, and screened for bias.

Art. 11-12 Documentation and logging

Technical documentation per Annex IV, automatic logging of events across the lifecycle.

Art. 13-15 Transparency, oversight, robustness

Clear instructions for use for deployers, effective human oversight, and appropriate accuracy, robustness, and cybersecurity.
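To make the logging obligation more concrete, here is a minimal sketch of automatic event logging built on Python's standard `logging` and `json` modules. The AI Act does not prescribe a log format. The field names, the logger name, and the example system ID below are assumptions chosen for illustration.

```python
import json
import logging
from datetime import datetime, timezone


def build_event(system_id: str, event_type: str, detail: dict) -> str:
    """Serialise one lifecycle event as a JSON line with a UTC timestamp."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system_id": system_id,    # hypothetical identifier from your inventory
        "event_type": event_type,  # e.g. "inference", "override", "retraining"
        "detail": detail,
    }
    return json.dumps(record, sort_keys=True)


# Hypothetical logger name; in practice the handler would write to durable storage.
logger = logging.getLogger("ai_event_log")
logger.setLevel(logging.INFO)
logger.addHandler(logging.StreamHandler())

logger.info(build_event("credit-scoring-v2", "inference", {"request_id": "req-001"}))
```

One JSON object per line keeps the log machine-readable, which helps when records have to be produced for an authority or a notified body.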

Penalty framework under Article 99

| Violation | Maximum fixed amount | Maximum share of turnover |
|---|---|---|
| Prohibited practices (Art. 5) | EUR 35 million | 7 % of worldwide annual turnover |
| High-risk violations (Art. 9-15) | EUR 15 million | 3 % of worldwide annual turnover |
| Incorrect information to authorities | EUR 7.5 million | 1 % of worldwide annual turnover |
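How the two columns combine matters for exposure estimates. The sketch below assumes the rule in Article 99 itself, which this table does not spell out: for undertakings the higher of the two values is the ceiling, for SMEs the lower. The tier keys and the function name are illustrative.

```python
# Tiers as listed in the table above: (fixed cap in EUR, share of turnover in percent).
PENALTY_TIERS = {
    "prohibited_practices": (35_000_000, 7),
    "high_risk_violations": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine_eur(tier: str, worldwide_turnover_eur: int, is_sme: bool = False) -> int:
    """Upper bound of the fine for one tier, in whole euros."""
    fixed_cap, percent = PENALTY_TIERS[tier]
    turnover_cap = worldwide_turnover_eur * percent // 100
    # Assumption taken from Art. 99, not from the table: the higher value is
    # the ceiling for undertakings, the lower value is the ceiling for SMEs.
    if is_sme:
        return min(fixed_cap, turnover_cap)
    return max(fixed_cap, turnover_cap)


if __name__ == "__main__":
    print(max_fine_eur("high_risk_violations", 1_000_000_000))
    print(max_fine_eur("high_risk_violations", 20_000_000, is_sme=True))
```

For a provider with EUR 1 billion in turnover, the high-risk ceiling under this reading is EUR 30 million rather than EUR 15 million. These are ceilings only. The actual fine depends on severity and duration.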

Annex III areas

Annex III defines eight high-risk areas: biometrics, critical infrastructure, education and vocational training, employment and workforce management, essential public services and creditworthiness assessment, law enforcement, migration and border management, justice and democratic processes.

German perspective: KI-MIG and KoKIVO at the Bundesnetzagentur

Germany is building its national supervisory architecture in parallel. The federal cabinet adopted the AI Market Surveillance and Innovation Funding Act (KI-MIG) on February 11, 2026. The first Bundestag reading was on March 20, 2026, with the Digital Affairs Committee leading.

Supervisory architecture in Germany

- Bundesnetzagentur (BNetzA): KoKIVO as central coordinator

- BaFin: oversight of AI in financial services and insurance

- BfDI: data protection aspects under GDPR and AI Act

- BSI: AI in critical infrastructure and cybersecurity

- Federal states and sectoral authorities: industry-specific supervision

Funding for the mid-market

The German government has launched a EUR 500 million compliance funding programme running until 2028 for SMEs with up to 500 employees. It covers up to 50 percent of compliance costs and a maximum of EUR 250,000 per company. Applications go through the Federal Ministry for Digital Affairs.
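The funding cap is simple arithmetic. The sketch below encodes only the conditions stated in this article (headcount limit, 50 percent rate, EUR 250,000 cap, and the FAQ's requirement of at least one high-risk system). The function name and parameters are hypothetical, and the actual programme rules may contain further criteria.

```python
MAX_EMPLOYEES = 500          # SME threshold named in the article
FUNDING_RATE_PERCENT = 50    # share of compliance costs covered
FUNDING_CAP_EUR = 250_000    # maximum per company


def estimated_funding_eur(compliance_costs_eur: int, employees: int,
                          high_risk_systems: int) -> int:
    """Rough funding estimate in whole euros; 0 if the stated criteria are not met."""
    if employees > MAX_EMPLOYEES or high_risk_systems < 1:
        return 0
    return min(compliance_costs_eur * FUNDING_RATE_PERCENT // 100, FUNDING_CAP_EUR)


if __name__ == "__main__":
    print(estimated_funding_eur(300_000, employees=120, high_risk_systems=2))
    print(estimated_funding_eur(800_000, employees=120, high_risk_systems=2))
```

With EUR 300,000 in compliance costs the estimate is EUR 150,000. From EUR 500,000 in costs upward the cap of EUR 250,000 applies.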

Status of the harmonised standards

CEN/CENELEC originally aimed to deliver the technical standards for conformity demonstration by mid-2025. The target is now end of 2026, opening a gap between applicability date and the full standards stack. Without finished standards there is no presumption of conformity; providers must demonstrate compliance individually and at higher cost.

Reactions: Bitkom warns, AlgorithmWatch criticises

Reactions are split. Bitkom warns of annual compliance costs of up to EUR 20 billion and an innovation drag on Germany's mid-market. Civil society sees the package as already too watered down.

Bitkom data on exposure

Sources: Bitkom Annual Study 2026 (February), Deloitte/YouGov survey of 500 German managers (March-April 2026).

Civil society criticism

On April 17, 2026 AlgorithmWatch documented that Microsoft proposals on data centre energy transparency had been adopted near-verbatim into EU policy. The investigation suggests industry interests have systematically shaped the AI Act process. More background in our Microsoft EU data centre lobby article.

Key compliance barriers

- Data security and compliance concerns: 33 percent

- Compliance talent shortage: 27 percent

- High costs for AI software and audit tools: 26 percent

- 1.5 high-risk systems on average per affected company

Challenges and risks

The failed Omnibus trilogue leaves providers and deployers with a high planning risk. Anyone betting on the deferral risks enforceable violations from August 3, 2026, because the deferral is not yet adopted.

Standards gap

Without finished harmonised standards there is no presumption of conformity. Conformity assessment must be demonstrated individually with notified bodies and detailed technical documentation.

Member state gap

As of March 2026, 19 of the 27 EU member states had not yet designated a single point of contact. Cross-border providers do not know which authority to address.

Presidency change

When Lithuania takes over the Council Presidency on July 1, 2026, the negotiation effectively restarts politically. A restart roughly four weeks before applicability is a serious risk.

Four scenarios for the path forward

| Scenario | Likelihood | Outcome |
|---|---|---|
| Quick agreement in May | 50 % | Compromise on Annex I, deferral takes effect |
| Closure under Lithuania Q3 | 25 % | Deal reached over summer, just before August deadline |
| Split package | 15 % | Annex III deferred separately, Annex I unresolved |
| Stalemate to August 2, 2026 | 10 % | Original deadlines become enforceable |

Source: Modulos AI estimate, April 2026.
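The scenario likelihoods can be combined to see what the estimate implies per annex. This is a sketch under two assumptions: the four scenarios are mutually exclusive, and the mapping of scenarios to deferrals is read off the "Outcome" column. The dictionary keys are illustrative.

```python
# Likelihoods in percent, copied from the scenario table (Modulos AI estimate).
SCENARIOS = {
    "quick_agreement_in_may": 50,
    "closure_under_lithuania_q3": 25,
    "split_package": 15,
    "stalemate_to_august_2": 10,
}

# Assumption: which scenarios end with a deferral in place, per the "Outcome" column.
DEFERS_ANNEX_III = {"quick_agreement_in_may", "closure_under_lithuania_q3", "split_package"}
DEFERS_ANNEX_I = {"quick_agreement_in_may", "closure_under_lithuania_q3"}


def combined_likelihood(scenario_names: set) -> int:
    """Sum the likelihoods (in percent) of mutually exclusive scenarios."""
    return sum(SCENARIOS[name] for name in scenario_names)


if __name__ == "__main__":
    print(combined_likelihood(DEFERS_ANNEX_III))
    print(combined_likelihood(DEFERS_ANNEX_I))
```

Read this way, the estimate implies a 90 percent chance that the Annex III deferral is adopted in time and a 75 percent chance for Annex I. The remaining 10 percent is the case in which the original dates become enforceable.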

What companies should do now

Treat August 2, 2026 as binding. If the deferral comes, you have gained time. If it does not come, you are prepared and can use compliance maturity as a competitive argument.

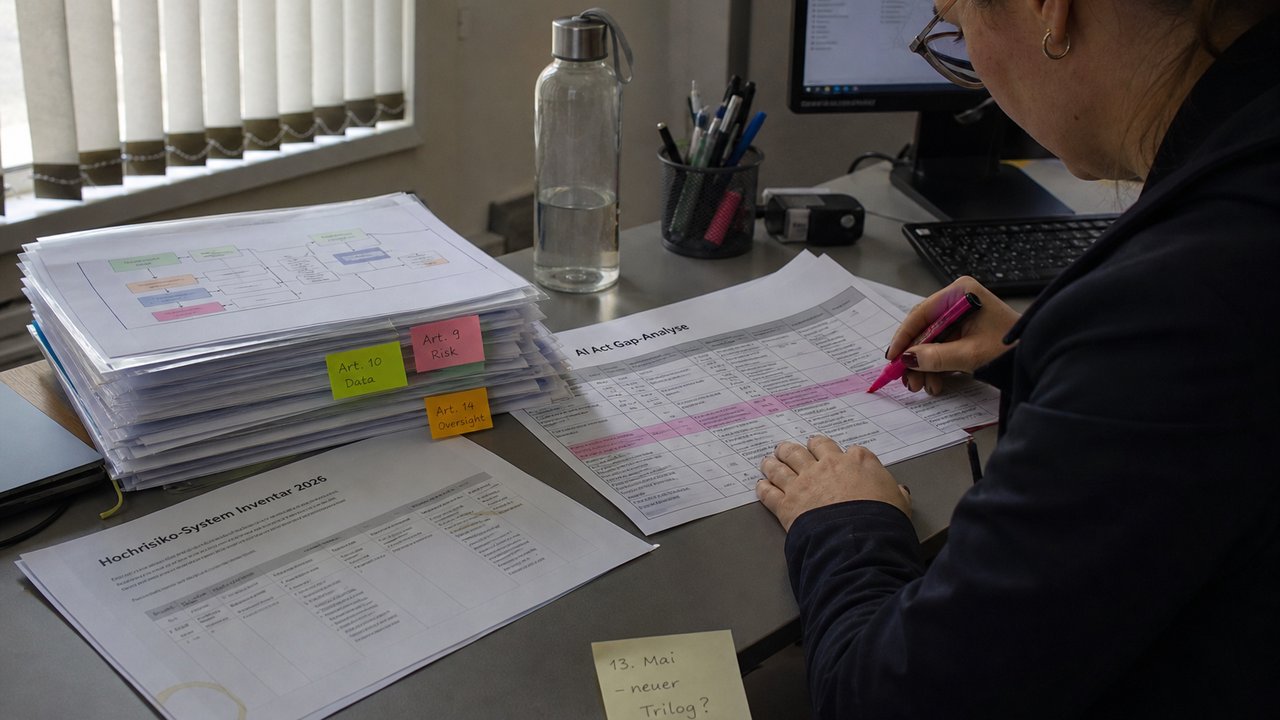

This week: inventory and accountability

Inventory all AI systems in use and in development. Classify against Annex III. Designate an accountable person in writing with a clearly assigned role. More than half of all companies still have no systematic AI inventory.

This month: gap analysis and notified bodies

Conduct a gap analysis against Articles 9-15 for every high-risk candidate. Verify your GPAI readiness (Art. 50-55) even if you only deploy. Engage notified bodies for Annex I products - their waiting lists are filling up.

This quarter: pipeline and governance

Build your Article 49 registration pipeline. Implement disclosure engineering for synthetic content. Anchor governance to ISO 42001. Use the AI Regulation Matrix as reference.

Funding check

The German EUR 500 million programme is open to SMEs up to 500 employees and funds up to 50 percent of compliance costs, capped at EUR 250,000 per company. Applications go through the Federal Ministry for Digital Affairs.
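The inventory and gap-analysis steps above can be sketched as a small data model. This is an illustration only: the class, field names, and example entries are hypothetical, and deciding whether a system actually falls under Annex III is a legal assessment that code can record but not make.

```python
from dataclasses import dataclass, field
from typing import Optional

# The eight Annex III areas as listed in this article.
ANNEX_III_AREAS = (
    "biometrics",
    "critical infrastructure",
    "education and vocational training",
    "employment and workforce management",
    "essential public services and creditworthiness assessment",
    "law enforcement",
    "migration and border management",
    "justice and democratic processes",
)

# Articles covered by the gap analysis.
HIGH_RISK_ARTICLES = (9, 10, 11, 12, 13, 14, 15)


@dataclass
class AISystem:
    name: str
    stage: str                             # "in use" or "in development"
    accountable_person: str                # designated in writing
    annex_iii_area: Optional[str] = None   # None = no Annex III area assigned
    evidence: dict = field(default_factory=dict)  # article number -> True once documented

    def __post_init__(self) -> None:
        if self.annex_iii_area is not None and self.annex_iii_area not in ANNEX_III_AREAS:
            raise ValueError(f"unknown Annex III area: {self.annex_iii_area}")

    def open_gaps(self) -> list:
        """Articles 9-15 without documented evidence; empty for non-candidates."""
        if self.annex_iii_area is None:
            return []
        return [a for a in HIGH_RISK_ARTICLES if not self.evidence.get(a, False)]


if __name__ == "__main__":
    screening = AISystem("cv-screening", "in use", "J. Doe",
                         "employment and workforce management", {9: True, 11: True})
    print(screening.name, screening.open_gaps())
```

Even a spreadsheet with these columns would serve the same purpose. The point is one row per system, one named owner, and a visible list of open articles.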

Strategy tip: Treat compliance maturity not as a cost centre but as a differentiator in B2B sales. Buyers and investors are asking for AI governance evidence in 2026. Demonstrating Article 9-15 conformity earns trust competitors still need to build.

What comes next

The follow-up trilogue on May 13, 2026 is the next milestone. After that the calendar tightens: between presidency change, summer break, and August 2, very little time remains.

May 13, 2026: Follow-up trilogue

Cypriot Presidency attempts a renewed compromise on Annex I.

June 2026: End of Cypriot Presidency

Last regular session month under the current presidency.

July 1, 2026: Lithuania takes over

New Council Presidency, new political dynamic, same deadline.

August 2, 2026: Annex III applicability begins

Original deadline for high-risk obligations Art. 9-15. Penalties become enforceable.

December 2, 2027: Proposed Annex III deferral

Conditional date, dependent on Omnibus adoption.

Frequently asked questions

What is the AI Act Omnibus?

The AI Act Omnibus is a proposed EU amendment package that would defer the AI Act's high-risk applicability dates. Annex III standalone systems would move to December 2, 2027, Annex I products to August 2, 2028. As long as the package is not adopted, August 2, 2026 remains legally binding.

What happened at the trilogue on April 28, 2026?

The second political trilogue between European Parliament, Council, and Commission collapsed after roughly twelve hours of negotiation without agreement. The sticking point was the treatment of AI in regulated products under Annex I. A follow-up trilogue is scheduled for May 13, 2026.

Which deadline applies now?

Until the Omnibus is formally adopted, August 2, 2026 remains the legally binding deadline for high-risk obligations under Annex III of the AI Act. Companies should not treat the postponement as a given and should target compliance by early August 2026.

What fines are possible?

Up to EUR 35 million or 7 percent of worldwide annual turnover for prohibited AI practices under Article 5, up to EUR 15 million or 3 percent for high-risk violations, up to EUR 7.5 million or 1 percent for incorrect information to authorities. Severity and duration determine the actual fine.

Who supervises AI in Germany?

The Bundesnetzagentur hosts the Coordination and Competence Centre for the AI Regulation (KoKIVO). Sectoral supervisors such as BaFin (financial services), BfDI (data protection), and BSI (critical infrastructure) keep their existing scope. The German cabinet adopted the AI Market Surveillance and Innovation Funding Act (KI-MIG) on February 11, 2026.

What funding is available for SMEs?

Germany has launched a EUR 500 million compliance funding programme running until 2028 for SMEs with up to 500 employees. It covers up to 50 percent of compliance costs, capped at EUR 250,000 per company. Eligibility requires using at least one high-risk system.

Which areas does Annex III cover?

Annex III defines eight high-risk areas: biometrics, critical infrastructure, education and vocational training, employment and workforce management, essential public services and creditworthiness assessment, law enforcement, migration and border management, justice and democratic processes.